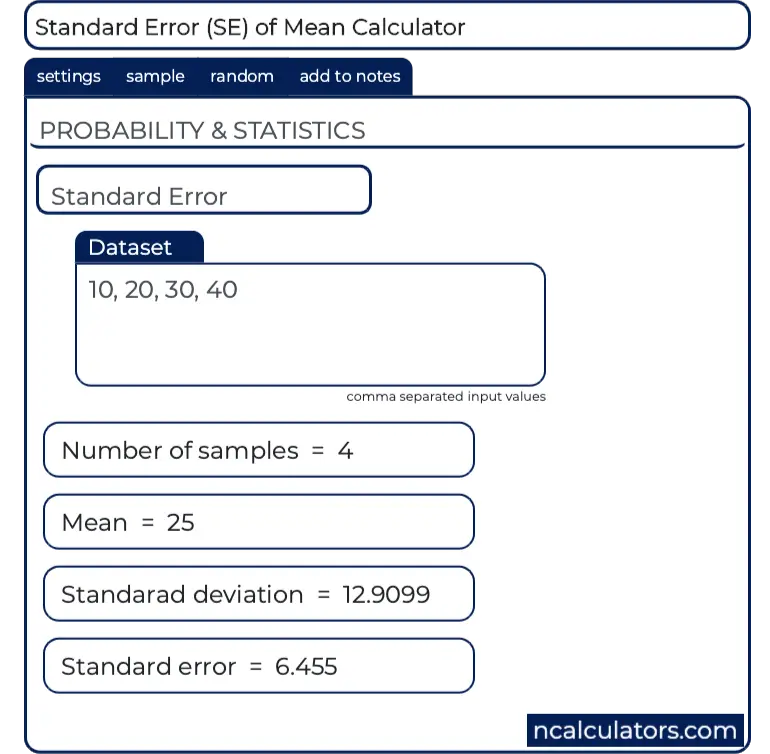

We already know that $SSR = 39.3601$ (the sum of squared residuals), so in order to compute $R^2$ using the simple formula $R^2 = 1 - SSR/SST$ we only have to determine $SST$. We have that $s_y = 0.8861$ (the sample standard deviation of the dependent variable), so $MST = s_y^2 = 0.7852$ (the sample variance), and it then follows that $SST = (n - 1) \cdot MST$, where $n$ is the sample size.

Standard error statistics are a class of inferential statistics. The standard error shrinks as the sample size increases, because sample means cluster more closely around the population mean.
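The arithmetic above can be sketched in a few lines of Python. This is only an illustration: the function name `r_squared` is made up for this example, and the sample size `n = 101` is an assumption, since the text does not state how many observations were used.

```python
def r_squared(ssr: float, s_y: float, n: int) -> float:
    """Return R^2 = 1 - SSR/SST, where SST = (n - 1) * s_y**2."""
    if n < 2:
        raise ValueError("need at least two observations")
    mst = s_y ** 2        # sample variance of the dependent variable
    sst = (n - 1) * mst   # total sum of squares
    return 1.0 - ssr / sst


# SSR and s_y are the values quoted in the text.
# n = 101 is a hypothetical sample size, used only to show the call.
example = r_squared(ssr=39.3601, s_y=0.8861, n=101)
```

With the real sample size in place of the assumed one, the same call gives the $R^2$ of the fitted model.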

Both the coefficient of determination and the standard error of the regression measure how well the model fits the data, and both can be calculated from the provided data.

The standard error (SE) of a statistic (usually an estimate of a parameter) is the standard deviation of its sampling distribution, or an estimate of that standard deviation. If the statistic is the sample mean, it is called the standard error of the mean (SEM). The sampling distribution of a mean is generated by repeated sampling from the same population and recording the sample means obtained. This forms a distribution of different means, and this distribution has its own mean and variance. Mathematically, the variance of the sampling distribution is equal to the variance of the population divided by the sample size: $\sigma_{\bar{x}}^2 = \sigma^2 / n$.

This is where the standard error in regression output comes from. For a value that is sampled with an unbiased, normally distributed error, the figure depicts the proportion of samples that would fall between 0, 1, 2, and 3 standard deviations above and below the actual value.

Simple linear regression:

- eq14.4: Estimated simple regression equation: $\hat{y} = b_0 + b_1 x$
- eq14.6: The slope $b_1$
- eq14.16: Standard error of the estimate: $s$

Calculate the residual sum of squares (the sum of squared deviations from the regression). The number calculated for $b_1$, the regression coefficient, indicates how much the predicted $y$ changes for each one-unit increase in $x$.

Exercise: The intensity of radiation of a radioactive substance was measured at half-year intervals. The results were as follows, where $Y$ is the relative intensity of radiation.

t (years): 1.5, 2.0, 2.5, 3.0, 3.5, 4.0, 4.5, 5.0, 5.5

Y: 000, 0.994, 0.990, 0.985, 0.979, 0.977, 0.972, 0.969, 0.967, 0.960, 0.956, 0.952

Knowing that radioactivity decays exponentially with time, $Y(t) = a e^{\beta t}$, linearize the model ($\ln Y = \ln a + \beta t$) and find $a$ and $\beta$.
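The statement that the variance of the sampling distribution equals the population variance divided by the sample size can be checked on a small case. The sketch below enumerates every possible sample of size 2 drawn with replacement from a six-value population. The population (a fair die) and the helper name `sampling_distribution_of_mean` are assumptions chosen for illustration, not part of the original text.

```python
from itertools import product
from statistics import mean, pvariance


def sampling_distribution_of_mean(population, n):
    """Return the mean of every possible size-n sample drawn with replacement."""
    return [mean(sample) for sample in product(population, repeat=n)]


population = [1, 2, 3, 4, 5, 6]  # illustrative population, not data from the text
n = 2
means = sampling_distribution_of_mean(population, n)

var_of_means = pvariance(means)        # variance of the sampling distribution
expected = pvariance(population) / n   # population variance / sample size
standard_error = var_of_means ** 0.5   # standard error of the mean
```

Because every equally likely sample is enumerated, `var_of_means` and `expected` agree up to floating-point rounding. In practice only one sample is available, so the population variance is replaced by its sample estimate.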

So, from our one sample, we can compute an estimate of $\beta_1$ and an estimate of its standard error. The estimated standard error of $\hat{\beta}_1$ is a good estimate of the true standard error, even though we are only calculating it from one sample.

To compute a standard deviation, first take the square of the difference between each data point and the sample mean, then find the sum of those values. Dividing that sum by $n - 1$ and taking the square root gives the sample standard deviation.

The OLS estimators of $\beta_1$ and $\beta_2$ are computed from $n$ observations, where the errors $\epsilon_i$ are iid with the same variance $\sigma^2$. The standard error of the regression provides an absolute measure of the typical distance that the data points fall from the regression line.
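A minimal sketch of these quantities for a simple linear regression is shown below, using the textbook least-squares formulas with the $b_0$ (intercept) and $b_1$ (slope) notation. The function name `simple_ols` and the five data points are made up for illustration and do not come from the text.

```python
from math import sqrt


def simple_ols(x, y):
    """Fit y = b0 + b1*x by least squares and return fit statistics."""
    n = len(x)
    if n != len(y) or n < 3:
        raise ValueError("need two equal-length sequences with at least 3 points")
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    b1 = sxy / sxx                 # slope
    b0 = y_bar - b1 * x_bar        # intercept
    # Residual sum of squares: squared vertical distances from the fitted line.
    residual_ss = sum((yi - (b0 + b1 * xi)) ** 2 for xi, yi in zip(x, y))
    s = sqrt(residual_ss / (n - 2))  # standard error of the regression
    se_b1 = s / sqrt(sxx)            # estimated standard error of the slope
    return {"b0": b0, "b1": b1, "residual_ss": residual_ss, "s": s, "se_b1": se_b1}


# Hypothetical data, used only to show the call.
x = [1, 2, 3, 4, 5]
y = [2.1, 3.9, 6.2, 7.8, 10.1]
fit = simple_ols(x, y)
```

The divisor `n - 2` reflects the two estimated coefficients. `s` is in the same units as $y$, which is why it can be read as a typical distance between the data points and the line.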